Goodwill Lab

Building better high-performance and quantum computing systems for science and society.

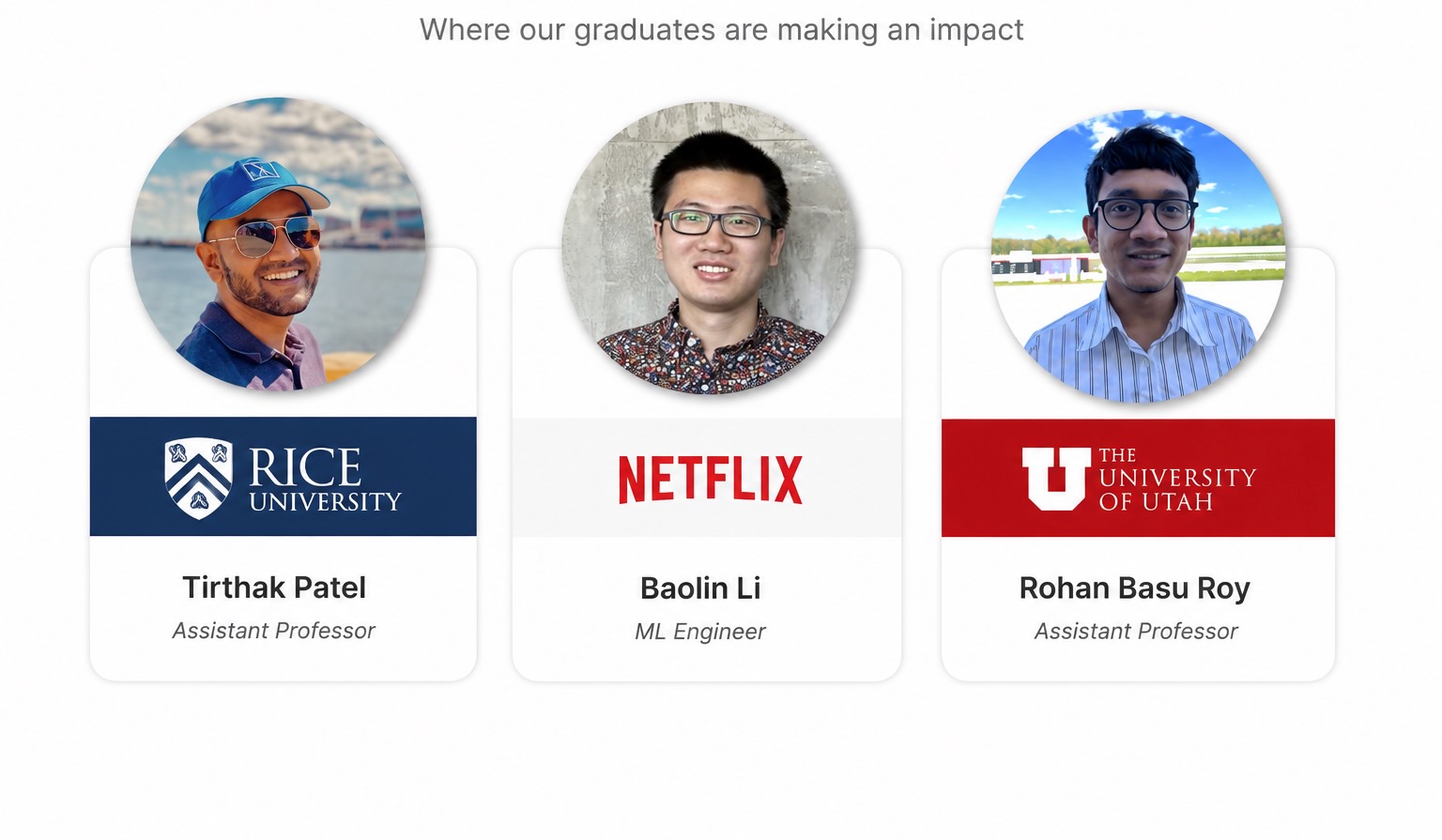

The Goodwill Lab develops high-performance computing (HPC) and quantum computing systems that are more efficient, accessible, and useful for solving problems of societal importance. We also prepare the next generation of students and educators through research, open-source artifacts, and outreach.

High-Performance Computing

Designing scalable systems, tools, and methods for modern scientific workloads.

Quantum Computing

Exploring emerging quantum systems and their role in future computing workflows.

Education & Outreach

Creating open-source artifacts and training opportunities for students and educators.